Going High Bandwidth Multi-Cloud with Colocations

It is no secret that cloud adoption is growing fast. Industry analysts such as Gartner estimate that the cloud infrastructure-as-a-service (IaaS) market will grow to $76.6B by 2022. While making the transition, customers often adopt multiple public clouds. In fact, more than 81% of respondents in a Gartner survey said they use two or more public cloud providers, a sign that multi-cloud is real.

In this journey to the public cloud, organizations connect their on-premise infrastructure to their cloud workloads and services over either the public Internet or dedicated connectivity. Many enterprise customers choose dedicated connectivity to access their mission-critical applications. These are the enterprises that have one or more of the following requirements:

- Use MPLS in their on-premise infrastructure and want to extend it to the cloud

- Need higher bandwidth throughput for applications working with large data sets

- Desire lower latency for applications using real time feeds

- Desire tighter SLAs for better user experience for public cloud workloads

Each of the public cloud providers has its own take on dedicated connectivity:

- AWS Direct Connect

- Microsoft Azure ExpressRoute

- Google Cloud Dedicated Interconnect

- Oracle Cloud Infrastructure FastConnect

Bandwidth options for dedicated connectivity vary by provider, ranging from 50Mbps to 10Gbps, and as high as 100Gbps for connecting with Google Cloud.
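To get a feel for what these bandwidth tiers mean in practice, here is a rough back-of-the-envelope sketch in Python. It estimates the ideal time to move a data set over links at a few of the speeds mentioned above. The 10 TB data set size and the `transfer_time_seconds` helper are illustrative assumptions, and the calculation ignores protocol overhead, latency and congestion, so real transfers will take longer.

```python
def transfer_time_seconds(data_bytes: float, link_bps: float,
                          efficiency: float = 1.0) -> float:
    """Ideal time to move data_bytes over a link of link_bps bits per second.

    efficiency is an assumed fraction (0 < efficiency <= 1) of the line rate
    that is actually usable; 1.0 means a perfect, overhead-free link.
    """
    if link_bps <= 0:
        raise ValueError("link_bps must be positive")
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    return data_bytes * 8 / (link_bps * efficiency)


TB = 10**12  # decimal terabyte, in bytes

# Link speeds taken from the range quoted above.
LINKS = {
    "50 Mbps": 50e6,
    "1 Gbps": 1e9,
    "10 Gbps": 10e9,
    "100 Gbps": 100e9,
}

if __name__ == "__main__":
    data_set = 10 * TB  # hypothetical data set size
    for name, bps in LINKS.items():
        hours = transfer_time_seconds(data_set, bps) / 3600
        print(f"{name:>8}: {hours:8.1f} hours")
```

Under these idealized assumptions, the same 10 TB data set takes roughly 444 hours at 50 Mbps, about 22 hours at 1 Gbps, and under 15 minutes at 100 Gbps, which is why applications working with large data sets push enterprises toward the higher tiers.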

These dedicated connectivity options are provided by the cloud providers in partnership with colocation providers like Equinix, CoreSite, Digital Realty, etc. The colocation facilities act as the new edge for the cloud network. Enterprises extend their networks to a colocation facility, where they are interconnected into the cloud provider’s network through one of the above dedicated connectivity options.

Today, we will take AWS Direct Connect (DX) as an example of a dedicated connectivity option. Since its launch in 2012, AWS DX has been a popular service that enterprises use to connect their on-premise infrastructure to AWS over one or more dedicated connections of 1Gbps or 10Gbps. The DX connection provides enterprises with a Layer 3 virtual interface (VIF), which extends from the colocation facility into AWS. Enterprises terminate the DX connection on their VPCs using a private virtual interface, or use a public virtual interface to connect to AWS public services, such as Amazon S3 or Amazon Kinesis.

Enterprises frequently grow their cloud footprint quickly and across multiple VPCs. Consequently, they run into the challenge of extending AWS DX into each of those VPCs, because a separate private VIF connection to each VPC may not scale well. To mitigate these scalability challenges, AWS introduced Transit Gateway (TGW), which connects to a new type of virtual interface called a Transit VIF on one end and attaches to the VPCs on the other. The Transit VIF is used in combination with a Direct Connect Gateway (DCG), which is attached to the Transit Gateway.

The Transit Gateway design, however, has its own limitations and restrictions, as listed below:

- Each Direct Connect connection supports only a single Transit VIF

- Administrators need to manually enter the CIDR ranges of the VPCs on the TGW so that they are advertised to the DCG, which then advertises them to the on-premise routers

- The number of routes advertised from the TGW to on-premise routers is limited to 20

- The number of routes advertised from on-premise routers to the cloud is limited to 100
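To illustrate how quickly the route limits above can bite, here is a minimal Python sketch that checks a list of VPC CIDR ranges against the 20-prefix limit and tries to summarize them using the standard library's `ipaddress` module. The limit constants are copied from the list above and should be treated as assumptions to verify against current AWS quotas. The VPC address plan and the helper names `summarize` and `fits_limit` are hypothetical.

```python
import ipaddress

# Limits as quoted in the list above; assumptions to verify against AWS quotas.
MAX_TGW_TO_ONPREM_PREFIXES = 20
MAX_ONPREM_TO_CLOUD_ROUTES = 100


def summarize(cidrs):
    """Merge adjacent or overlapping CIDR blocks into the fewest exact prefixes.

    collapse_addresses only merges blocks that combine exactly, so the
    summary never covers address space that was not in the input.
    """
    networks = [ipaddress.ip_network(c) for c in cidrs]
    return [str(n) for n in ipaddress.collapse_addresses(networks)]


def fits_limit(prefixes, limit):
    """Return True if the number of prefixes is within the given limit."""
    return len(prefixes) <= limit


if __name__ == "__main__":
    # Hypothetical address plan: 32 VPCs, each a /24 carved out of 10.0.0.0/19.
    vpc_cidrs = [f"10.0.{i}.0/24" for i in range(32)]

    print(len(vpc_cidrs), "VPC prefixes, within limit:",
          fits_limit(vpc_cidrs, MAX_TGW_TO_ONPREM_PREFIXES))

    summary = summarize(vpc_cidrs)
    print(len(summary), "summarized prefixes, within limit:",
          fits_limit(summary, MAX_TGW_TO_ONPREM_PREFIXES))
```

In this contrived plan the 32 contiguous /24 ranges collapse into a single 10.0.0.0/19, so the summary fits comfortably. Real VPC address plans are rarely this tidy, and non-contiguous ranges do not collapse at all, which is where the manual CIDR entry and the route limits become an operational burden.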

Furthermore, as I indicated earlier, most enterprises will not restrict themselves to a single public cloud provider and would rather distribute their workloads across AWS, Azure, and GCP. As they extend connectivity into the multi-cloud environment, enterprises are also concerned about securing the traffic to and from the on-premise network, as well as across the cloud instances.

How do we solve this at Alkira?

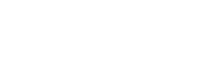

At Alkira, our mantra is to simplify complex problems while providing a secure, scalable architecture for enterprise customers. Alkira Cloud Area Networking is the first global unified multi-cloud network delivered as-a-service. It is a globally distributed virtual infrastructure of Alkira Cloud Exchange Points™ (Alkira CXPs), which are virtual multi-cloud points of presence with full routing and network services capabilities. Alkira allows enterprise customers to easily onboard their on-premise infrastructure into the Alkira Cloud Exchange Points using a variety of methods, including AWS Direct Connect. At the same time, customers connect their cloud workloads (VPCs, vNets) to the Alkira Cloud Exchange Points, achieving seamless high-bandwidth multi-cloud connectivity in minutes. Alkira’s solution also allows customers to spin up network and security services, like the Palo Alto Networks VM-Series Firewall, and to define granular intent-based policies that enforce business logic and bring enterprise-grade security to cloud and multi-cloud environments.

Speaking with many customers, we also often hear about the lack of network visibility into their infrastructure. In this case in particular, customers need visibility into how AWS Direct Connect is being utilized, so they can provision additional connections for even higher multi-cloud throughput and higher availability. The Alkira Cloud Services Exchange Portal provides customers with visibility into the health of the connections, bandwidth utilization on each connection, application traffic, top users, etc.
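As a rough illustration of the kind of capacity decision that utilization data enables, the Python sketch below flags connections whose peak throughput crosses a threshold. It is not Alkira's portal or API. The `Connection` class, the connection names, the sample values and the 80% threshold are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Connection:
    """A dedicated connection with throughput samples (hypothetical model)."""
    name: str
    capacity_bps: float
    samples_bps: List[float] = field(default_factory=list)


def peak_utilization(conn: Connection) -> float:
    """Highest observed throughput as a fraction of link capacity."""
    if conn.capacity_bps <= 0:
        raise ValueError("capacity_bps must be positive")
    return max(conn.samples_bps, default=0.0) / conn.capacity_bps


def needs_more_capacity(conn: Connection, threshold: float = 0.8) -> bool:
    """True if peak utilization meets or exceeds the assumed threshold."""
    return peak_utilization(conn) >= threshold


if __name__ == "__main__":
    # Made-up sample data for two connections.
    connections = [
        Connection("dx-primary", 10e9, [6.1e9, 8.7e9, 9.2e9]),
        Connection("dx-backup", 1e9, [0.20e9, 0.35e9]),
    ]
    for conn in connections:
        flag = "consider adding capacity" if needs_more_capacity(conn) else "ok"
        print(f"{conn.name}: peak {peak_utilization(conn):.0%} ({flag})")
```

A real deployment would likely look at sustained or percentile utilization rather than a single peak, but the idea is the same: without per-connection measurements, there is no basis for deciding when to provision another connection.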

To summarize, Alkira provides the following advantages to customers with AWS Direct Connect:

- Allows high bandwidth connectivity to the multi-cloud workloads without the need to have dedicated connectivity individually for each and every public cloud

- Orchestrates the underlying AWS Direct Connect components and requires no manual configuration to propagate routes between cloud and on-premise environments

- Removes limitations on the number of routes that can be exchanged between the on-premise and cloud environments

- Inserts network services, like firewalls, to inspect traffic between on-premise and cloud environments

- Provides deeper operational visibility into AWS Direct Connect usage for monitoring and troubleshooting

Contact us to discuss how Alkira simplifies network complexity and provides a superior solution for high bandwidth connectivity to the multi-cloud world.

Discover more of Alkira and download a CTO whitepaper at https://www.alkira.com/discover

Hear our customers describe their multi-cloud journey on a Packet Pushers podcast at https://www.alkira.com/discover/heavynetworking/